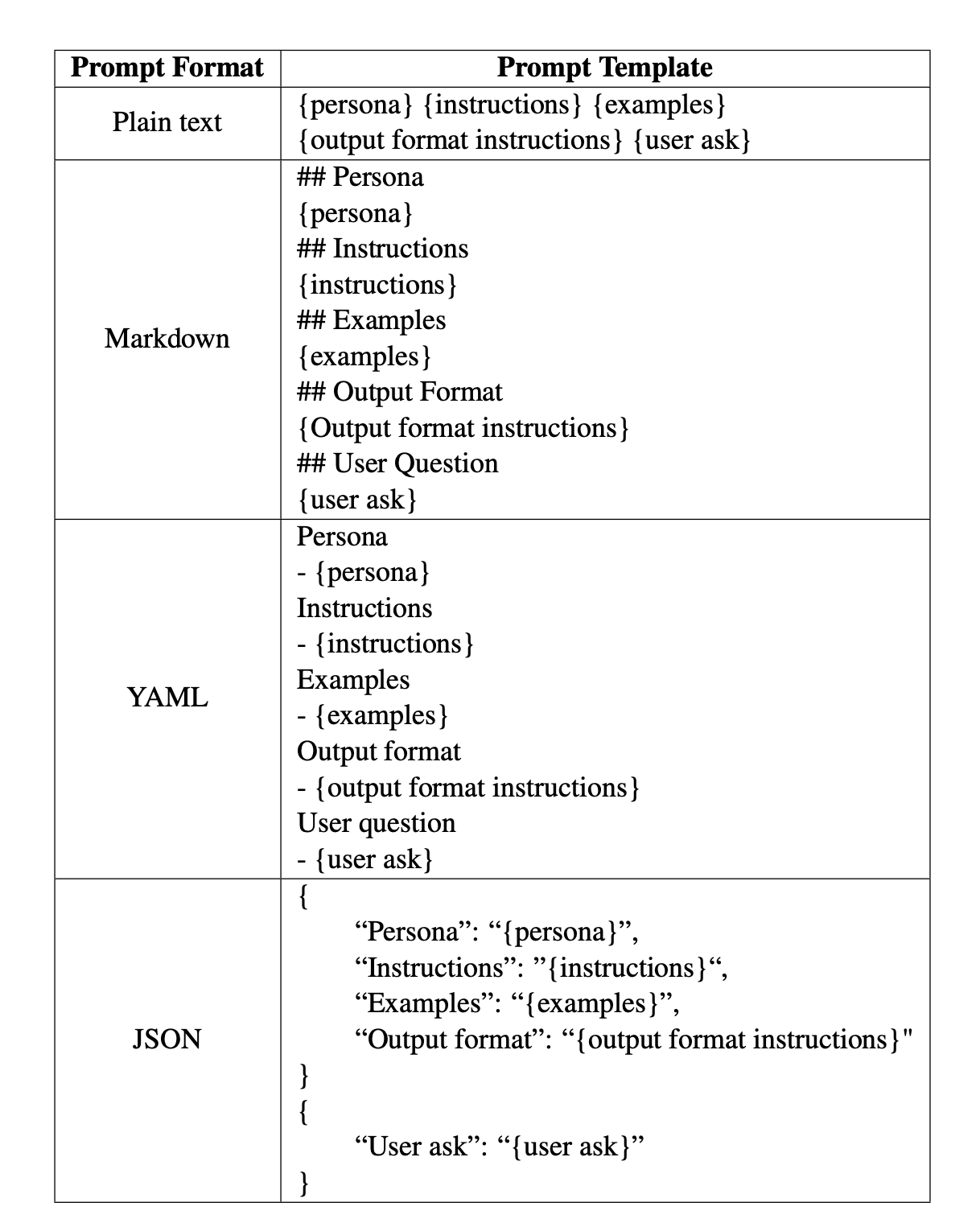

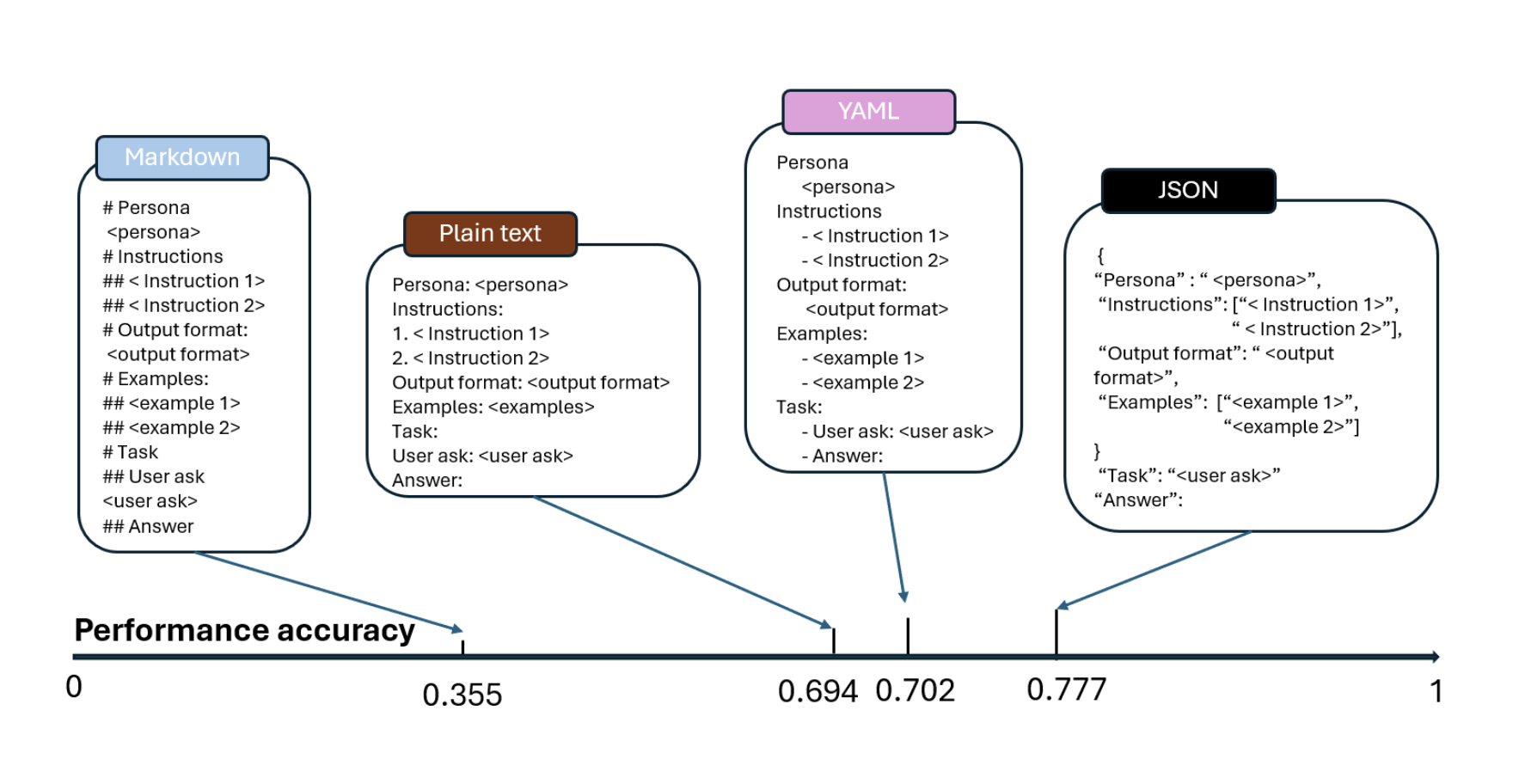

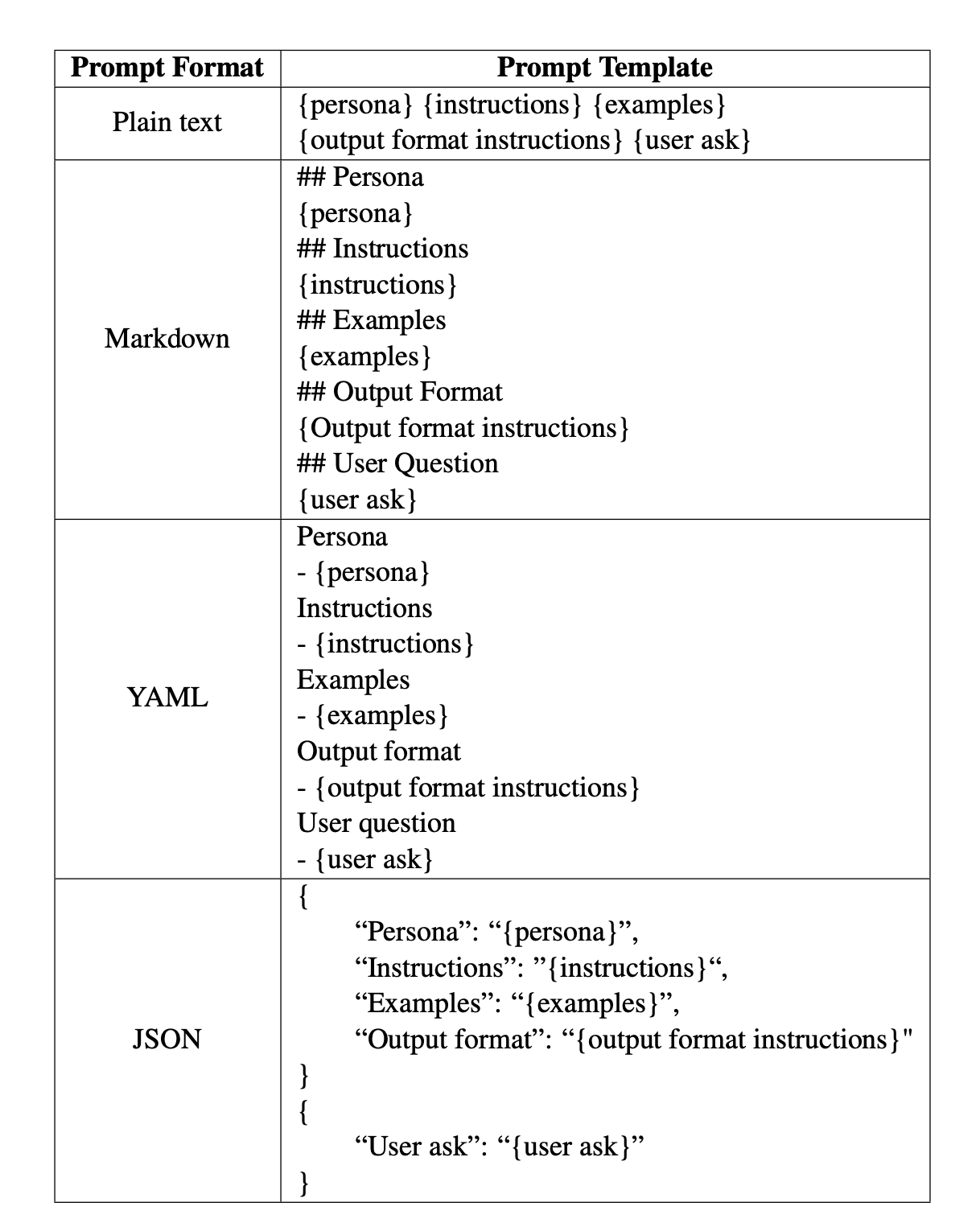

The study found large accuracy differences that depended purely on formatting. Even when the content of a prompt stays the same, changing how it is structured can make AI models give better or worse answers.

- Performance varied by roughly 40% depending on the format alone!

- Example: In a reasoning test, JSON formatting improved accuracy by 42% compared to Markdown.

- In a coding task, GPT-4 struggled when the prompt was formatted as JSON but performed well when it was formatted as Markdown.

These findings show that AI models do not treat equivalent inputs equally. The way you structure your input can make or break how well the model understands and responds.

There was no one-size-fits-all formatting solution. The best format depended on the task and model being used.

For example:

- If you’re asking an AI to analyze financial reports, JSON might work better because it organizes numbers and categories clearly.

- If you’re asking an AI to generate long-form text, Markdown might be the better choice because its headings break the content into sections that are easier to process.
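To make "same content, different structure" concrete, here is a minimal Python sketch that renders one piece of prompt content both ways. The field names and figures are hypothetical, chosen only for illustration; they are not taken from the study.

```python
import json

# Hypothetical prompt content (illustrative only, not from the study).
content = {
    "instruction": "Summarize the quarterly revenue trend.",
    "data": {"Q1": 120, "Q2": 135, "Q3": 128},
}

def to_json_prompt(content: dict) -> str:
    """Render the content as an indented JSON object."""
    return json.dumps(content, indent=2)

def to_markdown_prompt(content: dict) -> str:
    """Render the same content as Markdown sections with a bullet list."""
    lines = ["## Instruction", content["instruction"], "", "## Data"]
    for key, value in content["data"].items():
        lines.append(f"- {key}: {value}")
    return "\n".join(lines)

print(to_json_prompt(content))
print()
print(to_markdown_prompt(content))
```

Both prompts carry exactly the same information; only the structure differs, which is the variable the study isolated.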

This has massive implications for AI users. Whether you’re a software developer, researcher, or business professional, simply testing different prompt formats could lead to significantly better AI performance.
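One way to run that test yourself is a small harness that scores the same test cases under each format. This is a sketch under stated assumptions: `ask_model` is a hypothetical stand-in for whatever model client you use, exact string match is an assumed scoring rule, and `stub_model` is a deliberately biased toy (it only "understands" JSON) included so the example runs offline. It says nothing about how real models behave.

```python
import json
from typing import Callable

def as_json(content: dict) -> str:
    return json.dumps(content, indent=2)

def as_markdown(content: dict) -> str:
    # One "## key" section per field.
    return "\n\n".join(f"## {k}\n{v}" for k, v in content.items())

def accuracy_by_format(
    cases: list[tuple[dict, str]],
    formatters: dict[str, Callable[[dict], str]],
    ask_model: Callable[[str], str],
) -> dict[str, float]:
    """Fraction of cases answered exactly right under each prompt format."""
    scores = {}
    for name, fmt in formatters.items():
        correct = sum(ask_model(fmt(c)).strip() == expected for c, expected in cases)
        scores[name] = correct / len(cases)
    return scores

# Toy stand-in for a real model: it can only read JSON prompts.
def stub_model(prompt: str) -> str:
    try:
        data = json.loads(prompt)
    except json.JSONDecodeError:
        return "unsure"
    return str(data["a"] + data["b"])

cases = [
    ({"question": "Add a and b.", "a": 2, "b": 3}, "5"),
    ({"question": "Add a and b.", "a": 10, "b": 4}, "14"),
]
print(accuracy_by_format(cases, {"json": as_json, "markdown": as_markdown}, stub_model))
# {'json': 1.0, 'markdown': 0.0}
```

Swapping `stub_model` for a real client and adding your own cases turns this into a quick format comparison for your task and model.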